Think about programs running in virtual machines on a distributed computing service, which is one of the most critical environments there is.

Suppose every program running there, both the clients' programs and the host's own programs that keep the enterprise runtime going, were written so that the buffers holding critical data were never cached and thus never leaked (for example, by using cache-controlling functions). Then, no matter which flaws in cache usage were being exploited at the moment, no critical data could be read, and the level of harm would be lower.
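As a rough illustration of what a "cache-controlling function" could look like in user code, here is a minimal sketch that wipes a sensitive buffer and then evicts its cache lines with the x86 `_mm_clflush` intrinsic. The helper name `wipe_and_flush` and the 64-byte line size are assumptions made for this example. Note that flushing only evicts the data after use; it does not stop the data from being cached while it is in use, so it is a weaker measure than making the page itself uncacheable.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

#if defined(__SSE2__)
#include <emmintrin.h>   /* _mm_clflush, _mm_mfence */
#endif

#define CACHE_LINE 64u   /* assumption: 64-byte cache lines */

/* Hypothetical helper: zero buf[0..len) and evict every cache line
 * that covers it. Returns the number of cache lines covered. */
size_t wipe_and_flush(void *buf, size_t len)
{
    assert(buf != NULL);

    /* Volatile stores so the compiler cannot drop the wipe. */
    volatile unsigned char *bytes = buf;
    for (size_t i = 0; i < len; i++)
        bytes[i] = 0;

    /* Walk the buffer one cache line at a time. */
    uintptr_t p   = (uintptr_t)buf & ~(uintptr_t)(CACHE_LINE - 1);
    uintptr_t end = (uintptr_t)buf + len;
    size_t lines  = 0;
    for (; p < end; p += CACHE_LINE) {
#if defined(__SSE2__)
        _mm_clflush((const void *)p);   /* evict this line from all cache levels */
#endif
        lines++;
    }
#if defined(__SSE2__)
    _mm_mfence();                       /* order the flushes before later accesses */
#endif
    return lines;
}
```

On builds without SSE2 the flush calls compile away and only the wipe remains, so the sketch is only meaningful on x86.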

The safest data will probably always be the data stored on machines with no connection at all to outside networks, so that is something to take into account. Still, measures like the ones above could prove effective, because they rely on behavior that the CPU provides by design.

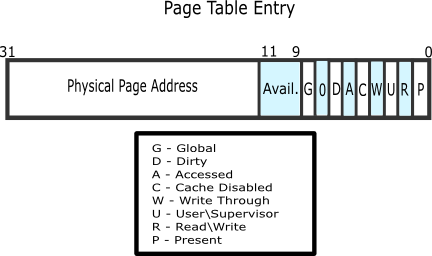

Disabling the cache only for buffers so critical that nobody wants them duplicated at unknown places in the system is not really a bad thing. That is why page entries have a bit to enable or disable caching of that page. It is there for a reason: this was surely considered when the CPU was designed. The designers probably had the notion that in some cases the state of the CPU could be analyzed in ways like this, and the result is features like that per-page cache-disable bit.
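On x86 that bit is the PCD (page-level cache disable) flag, bit 4 of the entry, next to PWT (write-through) at bit 3. The sketch below shows how the flag could be set and tested on a 32-bit entry for a 4 KiB page. The type `pte_t` and the helper names are hypothetical, and this is only the bit manipulation: real page tables are edited by the kernel, the change needs a TLB invalidation to take effect, and the effective memory type also depends on the PAT and MTRR settings.

```c
#include <assert.h>
#include <stdbool.h>
#include <stdint.h>

/* Flag bits of a 32-bit x86 page table entry (4 KiB page). */
#define PTE_PRESENT  (1u << 0)
#define PTE_WRITABLE (1u << 1)
#define PTE_PWT      (1u << 3)   /* page-level write-through */
#define PTE_PCD      (1u << 4)   /* page-level cache disable */

typedef uint32_t pte_t;          /* hypothetical name for this sketch */

/* Build an entry from a 4 KiB-aligned physical frame address and flags. */
pte_t pte_make(uint32_t frame_addr, uint32_t flags)
{
    assert((frame_addr & 0xFFFu) == 0);   /* low 12 bits belong to the flags */
    return frame_addr | (flags & 0xFFFu);
}

/* Return a copy of the entry with caching disabled for its page. */
pte_t pte_disable_cache(pte_t entry)
{
    return entry | PTE_PCD;
}

/* True if the entry has the cache-disable bit set. */
bool pte_cache_disabled(pte_t entry)
{
    return (entry & PTE_PCD) != 0;
}
```

For example, `pte_disable_cache(pte_make(0x00400000u, PTE_PRESENT | PTE_WRITABLE))` turns `0x00400003` into `0x00400013`: only bit 4 changes.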

Look at bit 4 in this page entry: